How to Choose a Cloud Provider: A Technical Framework for 2026

Choosing a cloud provider is a foundational engineering and business decision that dictates future scalability, operational overhead, and total cost of ownership (TCO). The right platform acts as a strategic accelerator; the wrong one introduces technical debt, unpredictable costs, and architectural constraints. This guide provides a technical framework for evaluating providers based not on marketing hype but on measurable criteria aligned with your specific requirements.

A Practical Framework for Your Cloud Provider Decision

By 2026, the choice between hyperscalers like AWS, Azure, and Google Cloud is a core business strategy decision. The selection process must be systematic and data-driven, insulated from vendor marketing and focused on your technical and financial realities. The optimal approach begins with a rigorous internal assessment of your architectural, operational, and compliance needs before engaging with any provider.

This framework is designed for technical decision-makers—CTOs, VPs of Engineering, and lead architects. It establishes a repeatable process for defining requirements, performing a technical evaluation, and making a defensible decision that supports long-term growth and financial stability.

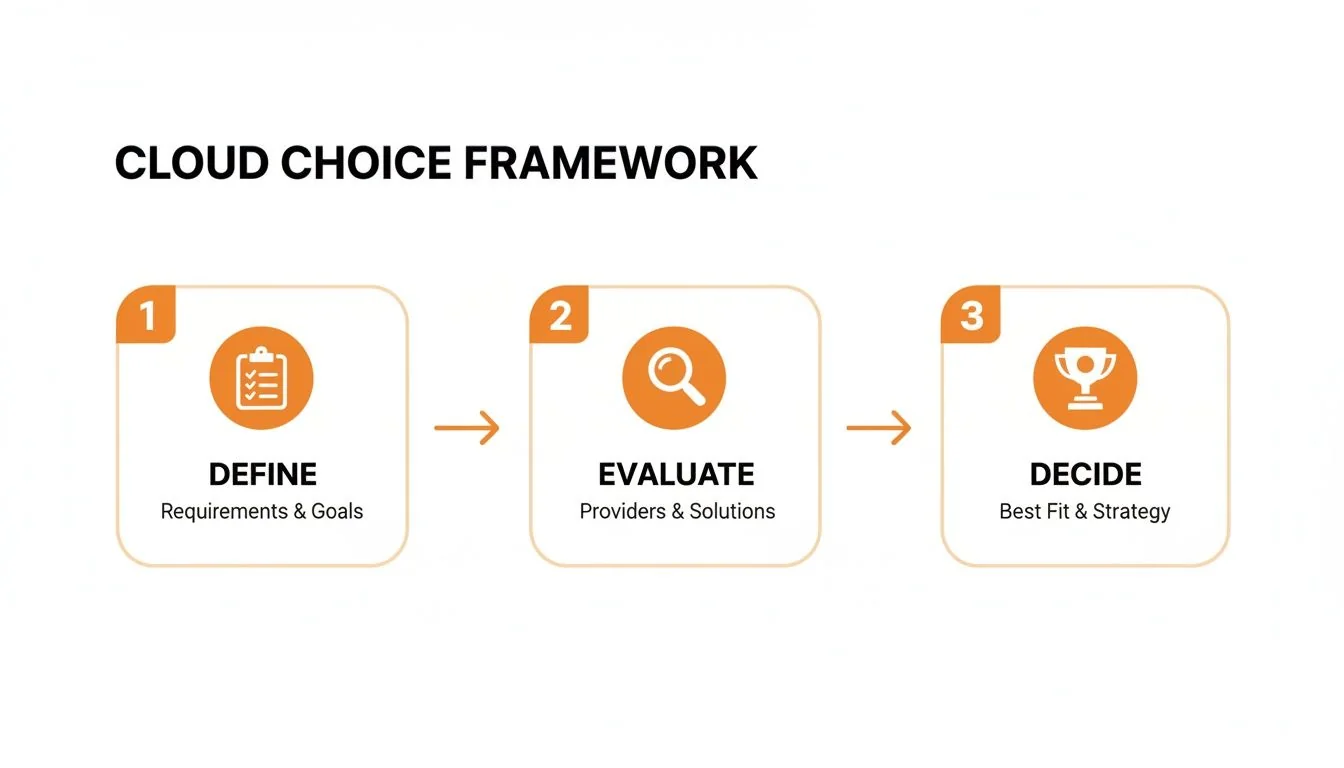

The Three-Step Decision Process

A successful cloud selection process can be distilled into three phases: defining internal requirements, evaluating provider capabilities, and making a data-backed decision. This structured approach prevents premature vendor analysis and ensures the final choice is grounded in your specific context.

Without a precise definition of your organization’s non-negotiable requirements, an objective comparison is impossible.

The following table provides a high-level snapshot of the “Big Three” to frame the initial analysis. Use this as a starting point for mapping their core competencies to your defined needs.

High-Level Cloud Provider Snapshot

| Factor | AWS | Azure | Google Cloud (GCP) |
|---|---|---|---|
| Market Position | Dominant market share leader with the most extensive service portfolio and a mature global ecosystem. Preferred by a diverse range of organizations from startups to large enterprises. | Strong #2 with deep integration into the Microsoft enterprise ecosystem. The default choice for organizations prioritizing hybrid cloud and existing Microsoft licensing investments. | A strong contender with deep engineering roots in containerization, data analytics, and machine learning. Favored by tech-forward, cloud-native organizations. |
| Core Competencies | Breadth of services, serverless computing (Lambda), mature IaaS offerings (EC2), and a vast partner and third-party tool ecosystem. | Hybrid cloud management (Azure Arc), enterprise software integration (Microsoft 365, Active Directory), and robust compliance offerings for regulated industries. | Managed Kubernetes (GKE), serverless data warehousing (BigQuery), AI/ML platform (Vertex AI), and a high-performance global network. |
| Primary Use Cases | General-purpose scalable web applications, large-scale data processing, lift-and-shift enterprise migrations, and workloads requiring a wide variety of service primitives. | Organizations with significant on-premises Windows Server/SQL Server footprint, complex hybrid cloud environments, and industries with stringent compliance needs (e.g., finance, healthcare). | Cloud-native, containerized applications, data-intensive analytics and machine learning workloads, and companies building AI-driven products. |

This overview provides context, but the optimal choice is determined by aligning these provider strengths with your specific technical and business requirements.

Mapping Your Technical and Business Requirements

Jumping directly into a provider comparison without a detailed internal blueprint is a common and costly error. The first step is to create an objective scorecard by mapping your organization’s technical, operational, and financial constraints. This internal audit becomes the definitive yardstick for measuring each potential provider. A 2026 cloud decision must account for future architectural evolution, data governance, and financial guardrails. The goal is to produce a single requirements document that aligns engineering, finance, and leadership.

Defining Your Technical Non-Negotiables

Your application architecture dictates your core cloud requirements. Migrating a monolithic legacy application presents entirely different challenges than deploying a distributed, container-based system.

- Application Architecture: Define your workload’s architectural pattern. Are you executing a lift-and-shift of existing virtual machines? Architecting greenfield, cloud-native microservices on Kubernetes? Or adopting a serverless-first strategy? The answer directly maps to a provider’s strengths. For instance, a fintech startup building an event-driven architecture will heavily scrutinize the maturity and integration of a provider’s serverless functions and messaging queue services.

- Data Sovereignty and Residency: Identify non-negotiable geographical constraints for data storage and processing. For companies with EU customers, GDPR compliance is a baseline requirement. Similarly, U.S. healthcare organizations require a HIPAA-compliant environment. Map your required data center regions and verify provider availability.

- Performance and Latency: Quantify your service-level objectives (SLOs) for application response times and availability. For real-time applications, gaming platforms, or global SaaS products, low latency is a critical feature, not a bonus. Evaluate each provider’s global network backbone, Points of Presence (PoPs), and CDN capabilities against these SLOs.
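Writing these non-negotiables down as data makes them checkable. The Python sketch below records required regions alongside an SLO target and flags providers that cannot cover every required geography. The `Requirements` class, the provider names, and the region labels are hypothetical placeholders; populate the region sets from each provider’s published region list.

```python
from dataclasses import dataclass, field


@dataclass
class Requirements:
    """Hypothetical requirements record; extend it with certifications, budgets, etc."""
    # Geographies where data must be stored and processed (residency constraints).
    required_regions: set[str] = field(default_factory=set)
    # Target p95 latency for the critical user path, in milliseconds.
    p95_latency_slo_ms: float = 200.0


def missing_regions(req: Requirements, provider_regions: set[str]) -> set[str]:
    """Return the required regions a provider does not offer."""
    return req.required_regions - provider_regions


# Placeholder labels, not real provider region identifiers.
req = Requirements(required_regions={"eu-central", "eu-west", "us-east"})
catalog = {
    "provider_a": {"eu-central", "eu-west", "us-east", "ap-south"},
    "provider_b": {"eu-west", "us-east"},
}

for name, regions in catalog.items():
    gaps = missing_regions(req, regions)
    print(f"{name}: {'all required regions available' if not gaps else sorted(gaps)}")
```

A region appearing in a provider’s list is only a first filter: service availability varies by region, so confirm that the specific services you depend on are offered where you need them.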

Aligning on Business and Financial Constraints

With the technical baseline established, layer in the financial and business guardrails by engaging finance and compliance stakeholders. This prevents a common failure mode: engineering selecting a platform that is financially unsustainable or non-compliant.

A credible Total Cost of Ownership (TCO) model must extend beyond compute and storage. It must incorporate data egress fees, premium support plans, and the cost of team upskilling—expenses that are frequently overlooked but have a material impact on the budget.

To build a realistic TCO forecast, quantify these secondary costs:

- Data Egress Fees: All major providers charge for data transferred out of their network. For applications serving large media files or with significant inter-region data replication, egress fees can become a substantial portion of the monthly bill, sometimes rivaling compute costs.

- Premium Support: The basic support tier is inadequate for production systems. Factor in the cost of a business-level or enterprise-level support plan, typically calculated as a percentage of your monthly spend.

- Team Training and Upskilling: Migrating to a new cloud platform requires investment in personnel. Calculate the cost and time required for certifications and training to ensure your team can operate the new environment efficiently and securely.

- Third-Party and Marketplace Tooling: Account for any required security, monitoring, or DevOps tools from the provider’s marketplace that are not included in the core service offerings.
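These secondary costs can be folded into a simple forecast. The sketch below is a rough multi-year model, assuming support is billed as a flat percentage of spend and training is a one-time cost; every figure in `MonthlyCosts` is a placeholder to be replaced with numbers from each provider’s pricing pages and your own usage data.

```python
from dataclasses import dataclass


@dataclass
class MonthlyCosts:
    """Hypothetical monthly inputs for one provider; every figure is a placeholder."""
    compute_and_storage: float  # USD, e.g. from the provider's pricing calculator
    egress_gb: float            # GB transferred out of the provider's network
    egress_price_per_gb: float  # USD per GB; take this from the current rate card
    tooling: float              # marketplace / third-party tools, USD per month
    support_rate: float         # support plan as a fraction of spend, e.g. 0.10


def multi_year_tco(c: MonthlyCosts, training_one_time: float, years: int = 3) -> float:
    """Rough TCO: recurring costs plus support over `years`, plus one-time training."""
    egress = c.egress_gb * c.egress_price_per_gb
    pre_support = c.compute_and_storage + egress + c.tooling
    # Simplification: support as a flat percentage of the rest of the bill.
    # Real support plans are often tiered and may have monthly minimums.
    monthly = pre_support * (1 + c.support_rate)
    return monthly * 12 * years + training_one_time


costs = MonthlyCosts(compute_and_storage=20_000, egress_gb=50_000,
                     egress_price_per_gb=0.08, tooling=1_500, support_rate=0.10)
print(f"3-year TCO: ${multi_year_tco(costs, training_one_time=40_000):,.0f}")
```

With these placeholder inputs, egress is $4,000 of a $25,500 pre-support monthly bill (about 16%), which is why it belongs in the model from the start rather than as an afterthought.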

This upfront documentation creates a robust evaluation framework. A fintech startup might prioritize a mature serverless ecosystem and specific regulatory compliance certifications. An enterprise with deep investments in Microsoft technologies will likely weigh a seamless hybrid cloud capability more heavily. This detailed map ensures you choose a partner based on your operational reality, not market noise.

Weighing the Platforms and Their Ecosystems

With your requirements documented, the next phase is a technical deep dive into the “Big Three.” This evaluation focuses on raw technical capabilities, service breadth, and ecosystem maturity, not marketing claims. The objective is to identify the platform whose architecture and operational model best align with your company’s technical DNA.

Market share serves as a useful proxy for platform stability, talent availability, and the breadth of the third-party tool ecosystem. For a CTO or CIO, market leadership often signals a lower-risk, long-term investment.

As of late 2025, AWS maintains its lead in the global cloud market, with Microsoft Azure and Google Cloud as the other dominant players. These three control the vast majority of a market experiencing explosive growth, driven by AI adoption and enterprise migrations. This stability is a key factor for businesses planning to scale globally. A detailed market analysis from Synergy Research Group is available on Cargoson.com.

Core Compute and Serverless Muscle

Compute services are the foundation of any cloud platform. While seemingly similar, their underlying architecture, performance characteristics, and integration with other services differ significantly.

- AWS offers Amazon EC2, the most mature IaaS offering with an extensive array of instance types. Its serverless platform, AWS Lambda, established the category and possesses the largest ecosystem of event triggers and integrations.

- Azure’s core strength with Azure Virtual Machines lies in hybrid environments. For enterprises with significant Windows Server investments, it provides the path of least resistance. Azure Functions integrates seamlessly into the broader Microsoft software stack, a key advantage for existing enterprise customers.

- Google Cloud provides Compute Engine, known for its high-performance networking and granular per-second billing. However, its standout offering is the Google Kubernetes Engine (GKE), widely regarded by engineers as the gold standard for managed Kubernetes due to its operational maturity and advanced features.

The choice often reflects a core philosophy. AWS provides the most comprehensive toolbox, with a service for nearly every conceivable use case. Azure offers the most seamless on-ramp for Microsoft-centric enterprises. Google Cloud delivers a highly polished, developer-centric platform optimized for modern, containerized architectures.

Finding the Right Fit: Specializations and Ecosystems

Beyond foundational services, each provider has established deep specializations. Aligning your specific needs with these specializations is critical for success. The right platform should feel like a natural extension of your team’s existing skills and architectural patterns.

A detailed AWS vs. Azure vs. GCP comparison is necessary to uncover the subtle but critical differences that impact daily operations and future capabilities. For side-by-side evaluations of specific consulting firms on each platform, see our head-to-head partner comparisons.

Consider these real-world scenarios:

- Global E-commerce Platform (SME): A retailer requiring low-latency service for millions of users. AWS is a strong candidate due to its vast global infrastructure, mature CDN (CloudFront), and diverse database offerings (DynamoDB for NoSQL, Aurora for relational workloads) that can support everything from real-time inventory to AI-driven recommendation engines.

- Regulated Healthcare Company (Enterprise): A hospital system migrating to the cloud while maintaining on-premises systems and adhering to strict data governance. Azure is often the preferred choice. Its hybrid capabilities via Azure Arc are best-in-class, and Microsoft has invested heavily in meeting compliance standards like HIPAA. Native integration with Active Directory simplifies identity and access management.

- AI-Driven FinTech Startup: A startup developing complex financial models requiring intensive data processing and ML capabilities. Google Cloud is purpose-built for this workload. Its leadership in data analytics (BigQuery) and AI/ML (Vertex AI), combined with the robustness of GKE, creates an optimal environment for building and scaling intelligent, cloud-native applications.

These examples illustrate a key principle: there is no single “best” cloud. The correct choice is the one whose strengths amplify your team’s effectiveness and your application’s architecture. If you’re unsure which provider fits your situation, a short cloud matching quiz can help narrow the field. The goal is not to pick a winner, but to select the optimal partner for the specific job.

Getting Real About TCO and Cloud Costs

After technical evaluation, the decision to choose a cloud provider often hinges on cost. However, list prices for virtual machines are misleading. A realistic Total Cost of Ownership (TCO) requires a deep analysis of complex pricing models and a clear understanding of potential hidden costs.

A provider’s scale is a direct indicator of its ability to invest in R&D and offer competitive pricing. In Q3 2025, the global cloud infrastructure market hit $107 billion, a 28% year-over-year increase driven by AI compute demand. AWS leads with 29% market share, while Azure’s consistent 20% share reflects its enterprise strength. These figures, reported by outlets like CRN.com, demonstrate the capital investment and pricing power of the major players.

Cracking the Code on Cloud Pricing Models

An accurate TCO model must be built on a clear understanding of the primary billing models offered by AWS, Azure, and GCP. Misaligning your workload with a pricing model is a common and expensive mistake.

- Pay-As-You-Go (On-Demand): The most flexible but also the most expensive option. Ideal for unpredictable workloads, development/testing environments, or short-term projects without a usage commitment.

- Reserved Instances (RIs) & Savings Plans: The primary mechanism for significant cost reduction, offering discounts that can reach roughly 70% in exchange for one- or three-year commitments. RIs are typically tied to specific instance families in a region, while Savings Plans offer more flexibility by committing to a specific hourly spend; Google Cloud offers a comparable mechanism as committed use discounts. These are essential for steady-state production workloads.

- Spot Instances/VMs: Leverages a provider’s spare compute capacity at discounts of up to 90%. The trade-off is that the provider can reclaim these instances with minimal notice; AWS, for example, issues a two-minute interruption warning. Spot is highly effective for fault-tolerant, stateless workloads such as batch processing, data analysis, and CI/CD pipelines.

The fundamental principle of cloud cost management is to match the predictability of your workload to the appropriate pricing model. Running a stable, 24/7 production database on an expensive On-Demand instance when a three-year Reserved Instance could reduce its cost by over 60% is a classic FinOps failure.
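The arithmetic behind that principle is simple enough to sketch. A commitment bills the discounted rate for every hour of the term whether or not you use it, so it only wins when expected utilization exceeds 1 minus the discount. The hourly rate and the 60% discount below are placeholders, and the model ignores upfront-payment options and mid-term changes.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours


def on_demand_cost(rate: float, utilization: float) -> float:
    """Annual cost paying the On-Demand hourly rate only for hours actually used."""
    return rate * HOURS_PER_YEAR * utilization


def committed_cost(rate: float, discount: float) -> float:
    """Annual cost of a commitment: discounted rate for every hour, used or not."""
    return rate * (1 - discount) * HOURS_PER_YEAR


def breakeven_utilization(discount: float) -> float:
    """Utilization above which the commitment beats On-Demand."""
    return 1 - discount


RATE = 0.50      # placeholder On-Demand USD/hour
DISCOUNT = 0.60  # placeholder commitment discount

for label, util in [("24/7 database", 1.0), ("nightly batch", 0.3)]:
    od, rc = on_demand_cost(RATE, util), committed_cost(RATE, DISCOUNT)
    best = "commit" if rc < od else "stay On-Demand (or use Spot)"
    print(f"{label}: on-demand ${od:,.0f} vs committed ${rc:,.0f} -> {best}")
```

At a 60% discount the breakeven is 40% utilization: the 24/7 database clearly belongs on a commitment, while a job that runs about 30% of the time is cheaper On-Demand, or on Spot if it tolerates interruption.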

Finding the “Hidden” Costs Before They Find You

A TCO calculation that only includes compute and storage is fundamentally flawed. Significant costs are often buried in ancillary services. Adopting a FinOps mindset from day one is critical to avoiding budget overruns.

Monitor these common sources of unexpected costs:

| Hidden Cost Category | Why It Matters |
|---|---|
| Data Transfer Fees | Providers charge for data egress (data moving out of their network). For applications with high outbound traffic (e.g., video streaming) or significant cross-region replication, egress fees can become a top-line expense. |
| API Call Charges | Many services, particularly object storage like S3 or Blob Storage, bill per API request (GET, PUT, LIST). An inefficiently designed application can generate millions of API calls, leading to significant and unexpected charges. |
| Premium Support Tiers | The free support tier is insufficient for production environments. Business or Enterprise support is a necessary operational expense, typically priced as a percentage of your total monthly spend, representing a significant recurring cost. |
| Logging & Monitoring | Services like Amazon CloudWatch, Azure Monitor, and Google’s operations suite charge for data ingestion, storage, and custom metrics. Comprehensive observability is non-negotiable but must be budgeted for. |
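To see how request charges scale with application design, the sketch below compares a chatty access pattern against a batched one. It assumes request pricing is quoted per 1,000 requests, as it is for Amazon S3; other providers may use a different unit, so normalize before comparing. The traffic figure and per-request prices are placeholders, not current list prices.

```python
def request_cost(count: int, price_per_unit: float, unit_size: int = 1_000) -> float:
    """Request charges: number of pricing units consumed times the unit price."""
    return count / unit_size * price_per_unit


PAGE_VIEWS = 50_000_000  # hypothetical monthly page views
LIST_PRICE = 0.005       # placeholder USD per 1,000 LIST requests
GET_PRICE = 0.0004       # placeholder USD per 1,000 GET requests
ITEMS_PER_PAGE = 20

# Chatty design: list the prefix, then fetch each item individually, on every view.
chatty = (request_cost(PAGE_VIEWS, LIST_PRICE)
          + request_cost(PAGE_VIEWS * ITEMS_PER_PAGE, GET_PRICE))

# Batched design: fetch one pre-built manifest object per view.
batched = request_cost(PAGE_VIEWS, GET_PRICE)

print(f"chatty: ${chatty:,.2f}/month  batched: ${batched:,.2f}/month")
```

The absolute numbers depend entirely on the placeholder prices; the point is that request counts, not just gigabytes stored, belong in the cost model.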

For a more granular analysis of cost control, see our guide on advanced cloud cost optimization strategies.

It’s About Tools and Culture

Each provider offers a suite of cost management tools. AWS Cost Explorer, Azure Cost Management + Billing, and the Google Cloud Billing console are essential for monitoring spend, setting budgets, and identifying optimization opportunities.

However, tools alone are insufficient. Effective, long-term cost management requires a FinOps culture that fosters collaboration between engineering, finance, and business units. For a startup, this might be a weekly review of the cost dashboard. For a large enterprise, it means dedicated FinOps teams managing reservations, negotiating enterprise agreements, and enforcing cost allocation tagging policies. A credible cost-based decision must look beyond public pricing calculators and model your specific usage patterns and operational realities.
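Tagging policies are one of the few FinOps controls that are easy to automate. The sketch below checks an exported resource inventory against a required set of cost-allocation tag keys (called labels on Google Cloud). The tag keys and inventory records are hypothetical; in practice the inventory would come from each provider’s resource or billing export.

```python
REQUIRED_TAGS = {"cost-center", "owner", "environment"}  # hypothetical policy


def tag_violations(resources: list[dict]) -> dict[str, set[str]]:
    """Map each non-compliant resource ID to the required tag keys it lacks."""
    violations = {}
    for resource in resources:
        present = set(resource.get("tags", {}))  # iterating a dict yields its keys
        missing = REQUIRED_TAGS - present
        if missing:
            violations[resource["id"]] = missing
    return violations


inventory = [
    {"id": "vm-web-01", "tags": {"cost-center": "cc-42", "owner": "web", "environment": "prod"}},
    {"id": "bucket-logs", "tags": {"owner": "platform"}},
    {"id": "db-reporting"},  # no tags at all
]

for rid, missing in tag_violations(inventory).items():
    print(f"{rid}: missing {sorted(missing)}")
```

Running a check like this on a schedule or in CI catches untagged resources before their spend lands unallocated in the monthly bill.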

Diving Into Security Posture and Compliance Controls

Choosing a cloud provider is an exercise in trust. You are outsourcing the physical security of your infrastructure. In 2026, a provider’s security posture and compliance certifications are not marketing features; they are foundational to your business’s resilience and reputation. The evaluation must be a critical audit of their shared responsibility model, the efficacy of their native security tools, and verifiable proof of compliance with relevant industry and government standards.

It’s a Shared Responsibility, But Where’s the Line?

All major providers operate on a shared responsibility model: they are responsible for the security of the cloud (physical data centers, network infrastructure, hypervisor), while you are responsible for security in the cloud (your data, IAM configurations, application-level security).

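The precise location of this dividing line varies between services and providers. On an IaaS virtual machine, for example, you are responsible for patching the guest operating system, whereas on a serverless service such as AWS Lambda the provider manages the underlying OS.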

A common failure pattern is assuming the provider is managing a security control that falls on the customer’s side of the line. A misconfigured storage bucket that exposes sensitive data is a customer failure, not a provider failure. Your selection process must include a detailed review of each provider’s model to ensure your team possesses the skills and tools to fulfill your responsibilities.
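The misconfigured-bucket case is a good example of a customer-side control you can verify yourself. The sketch below evaluates a bucket’s S3 Block Public Access settings using the four flags of the `PublicAccessBlockConfiguration` structure returned by S3’s GetPublicAccessBlock operation. It deliberately covers only bucket-level settings; account-level settings, bucket policies, and ACLs also affect exposure, and Azure and Google Cloud expose comparable controls through their own APIs.

```python
from typing import Optional

# The four flags in S3's PublicAccessBlockConfiguration.
PAB_FLAGS = (
    "BlockPublicAcls",
    "IgnorePublicAcls",
    "BlockPublicPolicy",
    "RestrictPublicBuckets",
)


def public_access_gaps(config: Optional[dict]) -> list[str]:
    """Return the Block Public Access flags that are not enabled.

    `config` is the PublicAccessBlockConfiguration dict, or None when the bucket
    has no configuration, in which case every flag is reported as a gap.
    """
    if config is None:
        return list(PAB_FLAGS)
    return [flag for flag in PAB_FLAGS if not config.get(flag, False)]


# Example: a bucket where someone disabled BlockPublicPolicy.
print(public_access_gaps({
    "BlockPublicAcls": True,
    "IgnorePublicAcls": True,
    "BlockPublicPolicy": False,
    "RestrictPublicBuckets": True,
}))
```

With boto3, `s3.get_public_access_block(Bucket=name)["PublicAccessBlockConfiguration"]` returns this dict; when no configuration exists the call raises a `ClientError`, which a caller could map to `None` here.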

Auditing the Alphabet Soup of Compliance

Vendor claims of compliance are insufficient; you must verify them with audit reports. For any technical leader, this means identifying your non-negotiable compliance standards and demanding the corresponding documentation.

Key certifications to verify:

- SOC 2 Type II: A critical audit of a provider’s controls over time, covering security, availability, processing integrity, confidentiality, and privacy. The absence of this report is a major red flag.

- ISO 27001: The international standard for information security management systems (ISMS), proving a formal, risk-based security program is in place.

- PCI DSS: A mandatory requirement for any organization that processes, stores, or transmits credit card data. Verification of Level 1 compliance is non-negotiable for e-commerce and fintech companies.

- HIPAA: For U.S. healthcare organizations, the provider must be willing to sign a Business Associate Agreement (BAA) and provide evidence of a HIPAA-compliant environment.

Providers like AWS, Azure, and Google Cloud make these audit reports available to customers via their portals (AWS Artifact, the Microsoft Service Trust Portal, and Google Cloud’s Compliance Reports Manager). Your security and compliance teams should review these documents directly to ensure they meet your specific requirements.
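Tracking that review as data keeps it from going stale between renewals. The sketch below flags required standards that have no evidence on file, or whose last review is older than a year. The one-year window and the standard names are assumptions; align them with your own audit calendar and with the reporting period stated in each report.

```python
from datetime import date, timedelta

# Hypothetical non-negotiables for one organization.
REQUIRED = {"SOC 2 Type II", "ISO 27001", "PCI DSS", "HIPAA BAA"}
MAX_AGE = timedelta(days=365)  # assumption: evidence older than a year needs re-review


def evidence_gaps(reviewed_on: dict[str, date], today: date) -> dict[str, str]:
    """Return required standards that lack evidence or whose review is stale."""
    gaps = {}
    for standard in sorted(REQUIRED):
        last = reviewed_on.get(standard)
        if last is None:
            gaps[standard] = "no evidence on file"
        elif today - last > MAX_AGE:
            gaps[standard] = f"last reviewed {last.isoformat()}"
    return gaps


provider_a = {
    "SOC 2 Type II": date(2025, 11, 1),
    "ISO 27001": date(2024, 6, 1),
    "PCI DSS": date(2025, 9, 15),
}
print(evidence_gaps(provider_a, today=date(2026, 1, 15)))
```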

Beyond the Certs: Native Tools and Data Residency

Compliance certifications are the baseline. The quality and integration of a provider’s native security tooling are what determine your team’s operational effectiveness.

This requires hands-on evaluation. Compare the identity and access management services: AWS IAM vs. Microsoft Entra ID (formerly Azure Active Directory) vs. Google Cloud IAM. Assess their managed threat detection services (e.g., Amazon GuardDuty, Microsoft Defender for Cloud, Google Security Command Center). Determine which toolset best integrates with your team’s existing workflows and expertise.

Data residency is another critical factor, particularly with regulations like GDPR. A provider’s global data center footprint directly impacts your ability to comply with data sovereignty laws. AWS’s extensive network of global regions is ideal for multi-region disaster recovery, while Azure’s deep presence in Europe often makes it a strong choice for companies with strict GDPR obligations.

Market share data reflects these specializations. As of 2025, AWS’s 30% global share is built on its broad service portfolio. Azure’s 20% is powered by its strength in regulated industries and hybrid enterprise deployments. Google Cloud’s 12-13% share is driven by its strength in data, AI, and developer tooling. The Big Three’s combined 63%+ market control, as detailed in HG Insights reporting, was earned through years of investment in these capabilities. Match your company’s risk profile to the provider whose strengths offer the most direct alignment.

Run a Proof of Concept That Delivers Clarity

Documentation and demos provide theoretical knowledge; a Proof of Concept (POC) provides empirical evidence. A well-designed POC is the most effective way to validate a provider’s capabilities against your specific workloads, moving your evaluation from spreadsheets to real-world deployment.

The scope of a POC should be narrow and focused. Do not attempt to replicate your entire infrastructure. Select one or two representative workloads that are critical to your business and technically demanding—a high-throughput API, a data-intensive analytics pipeline, or the core e-commerce transaction flow.

Deploying this workload slice will force your team to engage directly with the provider’s core platform services, from IAM and networking to compute and storage. This process uncovers the operational friction and “gotchas” that are absent from marketing materials.

What Does Success Look Like? Defining Your Criteria

A POC without predefined, measurable success criteria is an inefficient use of resources. Vague objectives like “test performance” are useless. Define specific, quantifiable metrics that map directly to your business and technical requirements.

Key evaluation criteria for a POC:

- Real-World Performance: Can the platform meet your SLOs under realistic load? Provision a test environment and measure key metrics like API response times, database query latency for complex reports, or the execution time for a batch data processing job. A well-defined goal is actionable: “The platform must maintain a p95 latency below 200ms for the core checkout API under a simulated peak traffic load of 10,000 requests per minute.”

- Developer Experience (DX) & Deployment Friction: Measure the time and effort required for your engineers to deploy the test workload from scratch. This provides a direct assessment of the provider’s documentation quality, CLI/SDK usability, and the overall developer experience. A significant difference in deployment time between providers is a strong signal of future operational overhead.

- Support Responsiveness: Use the POC to test the provider’s support organization. Open a standard support ticket for a non-urgent, technical question. Separately, use a premium support channel to escalate a more complex issue. Evaluate response time, the technical competence of the support engineers, and the efficiency of the escalation process.
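A latency criterion like the checkout example can be evaluated mechanically once the load test has produced per-request timings. The sketch below uses the nearest-rank percentile definition; load-testing tools often interpolate between samples, so their reported p95 may differ slightly. Note that 10,000 requests per minute is roughly 167 requests per second, which is the rate most load generators expect you to configure.

```python
import math


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile (no interpolation) of a non-empty list of samples."""
    if not samples:
        raise ValueError("percentile of an empty sample list is undefined")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def meets_p95_slo(latencies_ms: list[float], target_ms: float = 200.0) -> bool:
    """True if the p95 latency is strictly below the target."""
    return percentile(latencies_ms, 95) < target_ms


# Hypothetical POC results: 94 fast requests and 6 slow ones.
results = [120.0] * 94 + [250.0] * 6
print(percentile(results, 95), meets_p95_slo(results))
```

Six slow requests out of a hundred are enough to miss the goal even though the mean here is under 130 ms, which is why the criterion names a percentile rather than an average.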

Make the Final Call with a Decision Matrix & Red-Flag Checklist

After the POC, you will have a mix of quantitative data (performance metrics, cost estimates) and qualitative feedback (developer experience). To synthesize this information and mitigate personal bias, use a weighted decision matrix.

This tool forces a systematic and objective comparison of each provider against your predefined criteria. It transforms subjective opinions into a structured, data-driven decision.

The decision matrix serves as the single source of truth for the final selection. It requires all stakeholders, from the CFO to the lead engineer, to justify their positions with data derived from the POC, the TCO model, and the security audit. It elevates the conversation from “I prefer this platform” to “This platform is the optimal business and technical choice.”

Adapt this template for your evaluation.

Cloud Provider Decision Matrix Template

A weighted scoring template to objectively compare your shortlisted cloud providers across critical business and technical criteria.

| Evaluation Criteria | Weight (%) | Provider A Score (1-5) | Provider B Score (1-5) | Notes & Justification |
|---|---|---|---|---|
| Technical & Performance | 40% | | | |
| POC Performance Metrics | 15% | | | e.g., Met 200ms p95 latency goal. |
| Platform Services & Features | 10% | | | e.g., Native support for our DB engine. |
| Developer Experience (DX) | 10% | | | e.g., Team found CLI clunky. |
| Scalability & Reliability | 5% | | | e.g., Better auto-scaling options. |
| Financial | 30% | | | |
| Total Cost of Ownership (TCO) | 20% | | | e.g., 15% cheaper over 3 years. |
| Pricing Model Flexibility | 10% | | | e.g., More favorable reserved instance terms. |
| Support & Partnership | 15% | | | |
| Quality of Technical Support | 10% | | | e.g., Fast and expert POC support response. |
| Partner Ecosystem & Expertise | 5% | | | e.g., Certified local partners available. |
| Security & Compliance | 15% | | | |
| Compliance Certifications | 10% | | | e.g., Holds crucial industry certs (HIPAA). |
| Security Tooling & Features | 5% | | | e.g., Superior native WAF capabilities. |
| TOTAL | 100% | [Total Score] | [Total Score] | |
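Scoring the matrix is a weighted sum, which is straightforward to sketch. The weights below mirror the sub-criteria in the template; the provider scores are invented for illustration. The `red_flags` helper is one assumed way to operationalize a red-flag checklist: any criterion scored below a floor is surfaced for discussion even when the weighted total looks strong.

```python
# Sub-criterion weights from the template, as fractions of 1.0.
WEIGHTS = {
    "POC Performance Metrics": 0.15,
    "Platform Services & Features": 0.10,
    "Developer Experience (DX)": 0.10,
    "Scalability & Reliability": 0.05,
    "Total Cost of Ownership (TCO)": 0.20,
    "Pricing Model Flexibility": 0.10,
    "Quality of Technical Support": 0.10,
    "Partner Ecosystem & Expertise": 0.05,
    "Compliance Certifications": 0.10,
    "Security Tooling & Features": 0.05,
}


def weighted_score(scores: dict[str, int]) -> float:
    """Weighted total on the same 1-5 scale as the individual scores."""
    if abs(sum(WEIGHTS.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 100%")
    unscored = WEIGHTS.keys() - scores.keys()
    if unscored:
        raise ValueError(f"unscored criteria: {sorted(unscored)}")
    return sum(weight * scores[name] for name, weight in WEIGHTS.items())


def red_flags(scores: dict[str, int], floor: int = 2) -> list[str]:
    """Criteria scored below `floor` (a hypothetical threshold)."""
    return [name for name, score in scores.items() if score < floor]


provider_a = {name: 4 for name in WEIGHTS} | {"Developer Experience (DX)": 2}
provider_b = {name: 3 for name in WEIGHTS} | {
    "Total Cost of Ownership (TCO)": 5,
    "Compliance Certifications": 1,
}

for label, scores in [("Provider A", provider_a), ("Provider B", provider_b)]:
    print(f"{label}: {weighted_score(scores):.2f}, red flags: {red_flags(scores)}")
```

Here Provider B’s strong TCO score does not offset its weak spots, and its compliance score of 1 would be flagged even if the two totals were close.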

By executing a methodical POC and using a decision matrix to analyze the results, you can make a final selection with high confidence, ensuring it aligns with both your immediate technical needs and long-term business objectives.

Frequently Asked Questions

Even with a structured evaluation, several strategic questions frequently arise during the cloud provider selection process. Here are answers to common concerns from technical leaders.

How Much Should I Worry About Vendor Lock-In?

Vendor lock-in is a legitimate architectural risk, but its severity is manageable. The most effective mitigation strategy is to build on open standards, primarily Kubernetes.

Using a managed Kubernetes service such as GKE on Google Cloud, EKS on AWS, or AKS on Azure improves workload portability at the container level. Additionally, architect your applications to decouple core business logic from provider-specific managed services where feasible.

However, do not let fear of lock-in prevent the strategic use of powerful managed services that reduce operational burden. A managed database like Amazon RDS or Azure SQL Database can eliminate significant undifferentiated heavy lifting. The key is to be deliberate: leverage proprietary services for commodity functions where the operational benefits are clear, but maintain platform-agnosticism in your own application code.
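One common way to keep application code platform-agnostic is a ports-and-adapters structure: business logic depends on a small interface you own, and provider SDKs live only in adapters behind it. The sketch below uses a hypothetical `BlobStore` interface with an in-memory adapter; a production adapter might wrap boto3’s `put_object` and `get_object` for S3, or the equivalent Azure Blob Storage or Cloud Storage client calls.

```python
from typing import Protocol


class BlobStore(Protocol):
    """The only storage interface the business logic is allowed to depend on."""

    def put(self, key: str, data: bytes) -> None: ...

    def get(self, key: str) -> bytes: ...


class InMemoryBlobStore:
    """Local/test adapter. Provider-specific adapters would implement the same methods."""

    def __init__(self) -> None:
        self._objects: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = data

    def get(self, key: str) -> bytes:
        return self._objects[key]


def archive_invoice(store: BlobStore, invoice_id: str, pdf: bytes) -> str:
    """Business logic: knows about invoices, not about any cloud SDK."""
    key = f"invoices/{invoice_id}.pdf"
    store.put(key, pdf)
    return key


store = InMemoryBlobStore()
key = archive_invoice(store, "INV-001", b"%PDF-1.7 ...")
print(key, store.get(key) == b"%PDF-1.7 ...")
```

Switching providers then means writing a new adapter rather than touching `archive_invoice`. The cost is an extra layer of indirection, so reserve it for dependencies you genuinely expect might change.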

Is a Multi-Cloud Strategy Really Better?

A multi-cloud strategy can be effective for specific use cases, such as leveraging best-of-breed services from different providers (e.g., primary applications on AWS, ML workloads on Google Cloud) or achieving high levels of resiliency.

However, this approach introduces significant operational complexity. It requires managing disparate security models, networking configurations, identity systems, and cost controls across distinct ecosystems. This overhead is substantial.

For most small to medium-sized businesses and many enterprises, standardizing on a single primary provider is more practical and cost-effective. A multi-cloud architecture is best suited for large enterprises with mature DevOps and FinOps teams that have a specific, justifiable business or technical driver that outweighs the inherent complexity. Do not adopt multi-cloud for its own sake.

What Is the Role of a Cloud Consulting Partner?

An experienced cloud consulting partner acts as a force multiplier. They provide unbiased, specialized expertise gained from numerous migrations, which is difficult to replicate internally.

Key areas where a partner adds value:

- Building an accurate TCO model: They possess deep knowledge of pricing models and hidden costs, resulting in a more realistic financial forecast.

- Running a meaningful POC: They help design and execute tests that validate critical requirements and produce actionable data.

- Accelerating migration: They provide technical expertise and project management to accelerate the migration process while avoiding common pitfalls.

When vetting potential partners, verify their advanced certifications on your target platforms, request case studies from your industry, and ensure their engagement model and cost structure are transparent. Our independent partner ranking can serve as a useful starting point for building your shortlist.

Finding the right expertise is crucial. At CloudConsultingFirms.com, we provide data-driven comparisons of top cloud consulting partners, helping you choose the right team to guide your cloud journey with confidence. Explore our independent 2026 guide and find your ideal partner.

Peter Korpak

Chief Analyst & Founder

Data-driven market researcher with 10+ years helping software agencies and IT organizations make evidence-based decisions. Former market research analyst at Aviva Investors and Credit Suisse. Analyzed 200+ verified cloud projects (migrations, implementations, optimizations) to build Cloud Intel.
